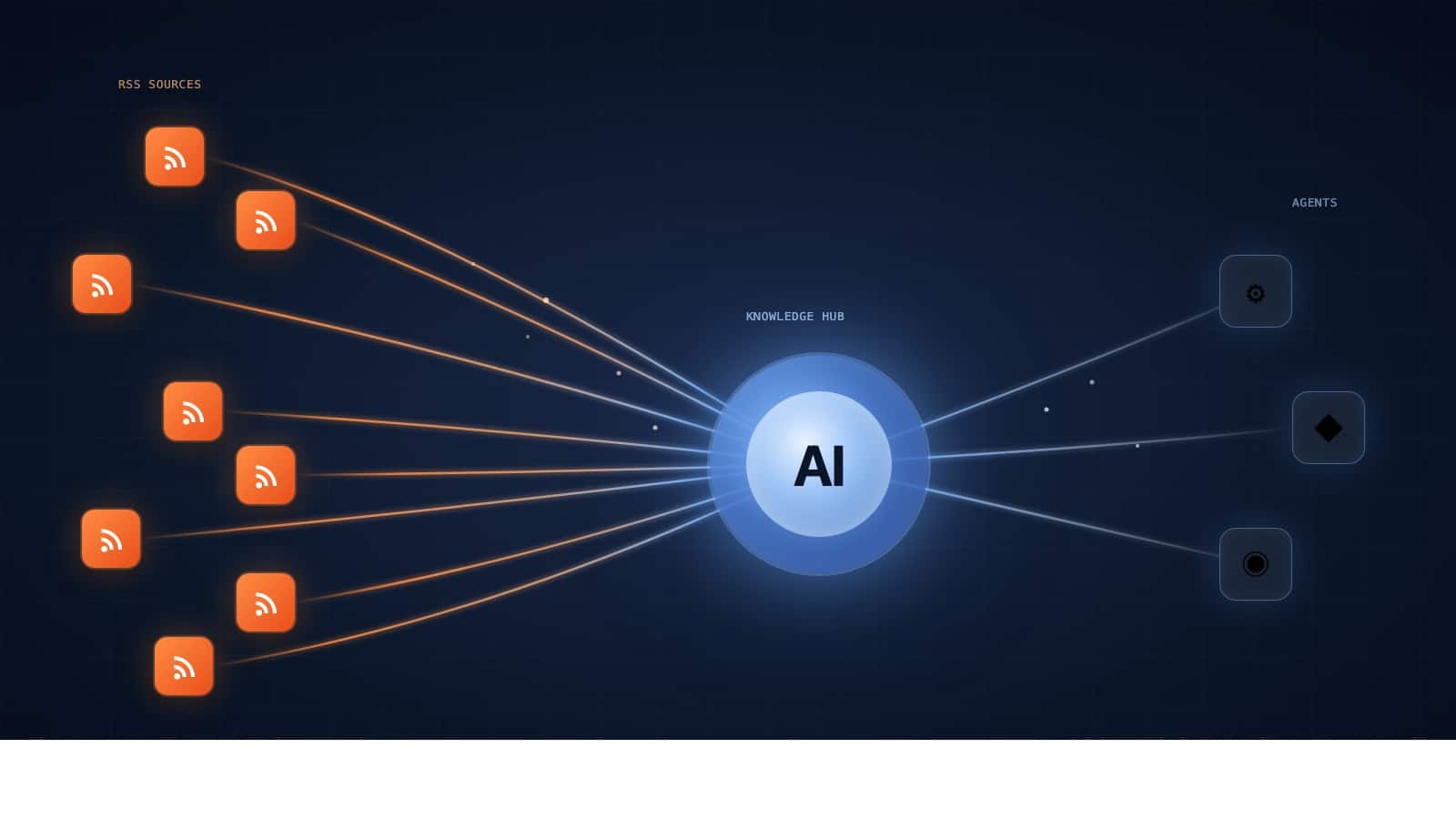

I didn’t set out to run an AI agency. I was a WordPress entrepreneur for over a decade, building products like WP Mayor, WP RSS Aggregator, and the Spotlight plugin. Then around 2023, I started building AI agents for my own businesses, got serious results, and realised there was a category-level opportunity in helping other businesses do the same thing. That became AgentVania.

What follows is not a “how to start an AI agency” guide. There are plenty of those online, most written by people who’ve never actually run one. This is what I’ve observed after doing it, the parts that don’t show up in the course sales pages.

Why I Started an AI Agency

The honest reason: I saw a gap that matched what I already knew how to do.

Building software businesses taught me that the hardest part of technology is rarely the technology itself. It’s figuring out where it creates real value, translating that into something a non-technical buyer can understand and trust, and then delivering on the promise. Those skills transfer directly into AI.

The opportunity I saw specifically was that AI agents were becoming genuinely capable, but the gap between “capable in a demo” and “deployed reliably in a business” was enormous. Most business owners I spoke to either dismissed AI entirely or had unrealistic expectations of what it could do. Very few had the combination of technical depth and business sense to bridge that gap themselves.

That gap is where an AI agency lives. And in late 2023, it was wide open.

The Gap Between Demos and Production

This is the thing that catches most new AI agency operators off guard: a demo that takes two hours to build can take two weeks to make production-ready.

Demos are designed to show the happy path. The inputs are clean, the APIs behave, the model responds as expected. You show a client a 10-minute walkthrough and they’re genuinely impressed. Then you start building for real, and you encounter the actual conditions: messy data, edge cases the model handles badly, rate limits, latency issues, integration quirks, error states nobody anticipated.

I’ve seen agents that worked flawlessly in testing fail in production because the client’s CRM had inconsistent field naming, or because the email threading format varied in ways the agent didn’t handle. These aren’t exotic problems. They’re the standard condition of any real business’s data and tooling.

The practical implication for running an agency: you have to build in much more buffer than you think. Demos sell work. Production work is where the project actually lives. Your scoping, your pricing, and your project timelines all need to reflect the production reality, not the demo.

Client Education Is Half the Job

I underestimated this completely in the first few months.

Clients arrive with one of two misconceptions. Either they think AI can do everything, including things that aren’t possible yet, and that all they need to do is hand over their problems and wait. Or they think AI is a gimmick and are only exploring it because their board or a competitor forced them to take it seriously.

Neither group knows what they actually need. The first group needs to be brought back to reality before any proposal is written, because over-promised engagements end badly for everyone. The second group needs to understand what AI agents actually are before they can evaluate whether they want one.

The clients who work out well are the ones who come in curious and honest about their constraints. They know their business, they know what’s currently painful, and they’re willing to be guided on the technical side. Those engagements produce real results. The clients who arrive with a fixed idea of what they want before any discovery has happened are usually harder to work with.

I now front-load education heavily. Before any scoping conversation, I send material that calibrates expectations. I ask pointed questions about what “success” looks like in six months. I push back on wishlist features early, because removing scope before a project starts is far easier than removing it after a contract is signed.

The Technology Changes Every Month

This is both the most exciting and the most exhausting part of the job.

In the WordPress world, the core technology was stable. You could build expertise that compounded over years, and the fundamentals you learned in 2015 were still largely true in 2020. AI is not like this. The model capabilities that defined what was possible in January can be obsolete by March. Frameworks change, pricing structures change, and the line between what you have to build from scratch and what a platform now handles natively keeps moving.

My approach has been to stop trying to stay on top of everything, which is impossible, and instead stay close to the primitives: how language models reason, what they’re structurally bad at, how memory and retrieval work, and what the right abstraction levels are for building agents. Those fundamentals shift more slowly than the specific tools and APIs built on top of them.

In practice that means I follow a small number of researchers and builders whose signal-to-noise ratio is high and I ignore the rest. I build with new tools when a client problem genuinely requires it, not because the tool is being hyped. And I maintain a clear line between “this is interesting and I’m watching it” and “this is ready to put in a client system.”

Chasing every new release is a fast way to build nothing properly. The clients who get results from AI don’t need you to use the latest model. They need you to solve a specific problem reliably.

Pricing Is Genuinely Hard

I’m going to be direct about this because most agency content skirts around it.

Hourly pricing doesn’t work well for AI agent work. The time to build something is a poor proxy for its value, and it creates the wrong incentives. An agent that takes 40 hours to build might generate hundreds of hours of savings per month for the client. Billing by the hour undersells you badly on the projects that go well and creates scope disputes on the ones that don’t.
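A rough worked example of that mismatch, as a sketch only. The 40 build hours come from the paragraph above. Every other figure (the agency rate, the 200 hours saved per month standing in for “hundreds of hours”, and the client’s internal cost per hour) is a made-up assumption for illustration, not a number from a real project.

```python
def hourly_fee(build_hours, agency_rate):
    """What the agency invoices under pure hourly billing."""
    return build_hours * agency_rate


def annual_client_value(hours_saved_per_month, client_hourly_cost, months=12):
    """Rough value of the time the agent frees up for the client over a year."""
    return hours_saved_per_month * client_hourly_cost * months


# Hypothetical inputs: only the 40 build hours come from the text.
fee = hourly_fee(build_hours=40, agency_rate=150)
value = annual_client_value(hours_saved_per_month=200, client_hourly_cost=40)

# Share of first-year value the agency captures when billing by the hour.
captured_share = fee / value

print(f"Hourly fee: {fee}, first-year value: {value}, share: {captured_share:.1%}")
```

Under these assumed numbers the hourly invoice is a small fraction of the first-year value delivered, which is the sense in which build time is a poor proxy for what the work is worth.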

Pure project-based pricing is cleaner but requires you to scope accurately, which is genuinely difficult when you’re working with technology where the edge cases are hard to predict in advance. Early on, I mis-scoped projects in both directions. Quoted too low on complex integrations and had to absorb the overrun. Quoted too high on straightforward builds and lost deals to cheaper competitors.

What’s worked better is value-based framing with a structured engagement model. Start with a discovery and scoping phase that’s paid separately, before any build commitment. This does two things: it surfaces the real complexity before you’ve committed to a price, and it filters out clients who aren’t serious. Anyone who won’t pay for a scoping engagement probably isn’t the right client anyway.

For the build itself, I’ve moved toward phased project pricing with defined deliverables and acceptance criteria at each phase. The client knows exactly what they’re getting and when. You have clear points to renegotiate scope if the project evolves. It’s less elegant than a single project price, but it reflects the reality of how this work actually unfolds.

The Skills That Matter Most

It’s not coding ability, though being able to read and write code helps.

The single most valuable skill in this work is understanding business processes. Before you can build something useful, you need to understand exactly how a task gets done today, where the friction is, what the error rates are, and what “good enough” looks like. Most of that understanding comes from asking the right questions and listening carefully, not from technical knowledge.

The second is knowing when not to use AI. I’ve turned down projects where a client wanted an AI agent built for something that would be better served by a simple automation or, in a couple of cases, just a different process entirely. Recommending against your own service is uncomfortable, but clients remember it. The long-term relationship is worth more than one bad-fit project.

Third is communication. Writing clearly, explaining technical concepts without jargon, setting expectations in writing rather than in passing conversation. When a project goes sideways, the clients who feel informed and managed well are dramatically easier to work with than the ones who feel surprised. Almost every serious project issue I’ve seen was made worse by a communication failure, not a technical one.

The technical side matters too. You need to understand how agents work, how to evaluate model performance, how integrations function. But you can learn that. Good business judgement and clear communication are harder to acquire.

What I’d Do Differently If Starting Today

Specialise earlier. The instinct when starting an agency is to take any work that comes through the door, which makes sense for cash flow but slows down the development of real depth. The agencies that stand out are the ones that own a specific vertical or problem type. “We build AI agents for e-commerce businesses” is a more compelling and more defensible position than “we build AI agents for businesses.”

Build the discovery phase into every engagement from the start, not as a premium add-on you introduce after the first few projects. It changes the dynamic of every client conversation and protects you from projects that should never have been scoped the way they were.

I’d also invest earlier in case studies and documented results. The thing that builds trust with new clients faster than anything else is specifics: not “we help businesses save time with AI” but “we built a qualification agent for a 12-person SaaS team that cut their sales team’s manual triage time by 60%.” Real numbers from real projects compound. Collecting them systematically from the beginning pays off faster than most marketing activity.

Is It Worth It?

Yes, with conditions.

The market for well-executed AI implementations is real and growing. Businesses are trying to figure out how to actually use this technology, and many of them genuinely need outside help to do it. If you understand both the technology and how businesses work, there’s meaningful demand for that combination.

But it’s not a low-effort business. The work is complex, client expectations require constant management, and the technology you’re building on evolves fast enough that you’re always partly learning on the job. It rewards people who can hold ambiguity, communicate clearly, and prioritise getting things working in the real world over getting things looking impressive in a demo.

The businesses that get the most from working with an AI agency are the ones that are honest about their problems and willing to invest in solving them properly, not the ones looking for a shortcut. If you’re thinking about whether AI is the right move for your business, that honesty is where to start.

And if you’re thinking about starting an agency yourself: the opportunity is real, but it’s a business, not a product. The hard parts are the same hard parts that apply to any professional services firm. The technology is the easy bit.
