When I started using AI seriously in my businesses, I made the same mistake I see other business owners make now. I went for the tasks that felt exciting to automate rather than the ones that would save me the most time.

The result was a lot of tinkering, a few impressive demos, and not much time back. It took me a while to work out what was actually working and build from there.

This is the guide I wish I’d had at the start. Not a list of every possible thing AI can do, but a clear breakdown of what to prioritise, what to skip, and how to think about it systematically.

The Mistake: Starting With the Wrong Tasks

The temptation when you first get access to capable AI tools is to point them at your most interesting problems. The hard stuff. The complex decisions. The work that takes real thinking.

That’s backwards.

AI performs best on tasks that are high-volume, clearly defined, and don’t require judgement about things it can’t see. The exciting, complex work tends to fail the second or third of those tests. So you get mediocre output on the tasks that matter most, get frustrated, and draw the wrong conclusion that AI isn’t that useful.

The business owners who get real results start with the unglamorous stuff. The tasks that are repetitive enough to be boring, defined enough to be scripted, and numerous enough that saving time on each one actually adds up. That’s where the returns are, and they compound faster than you’d expect.

The Tasks I Actually Automated First

In practice, my first wave of automations fell into four categories.

Email triage

I run multiple businesses, which means I have multiple inboxes doing their best to claim my mornings. The first thing I automated was the categorisation and prioritisation layer. AI reads incoming email, flags what needs a response today, what can wait, and what can be handled by a templated reply or routed to someone else. I still write a lot of emails myself. But I stopped starting my day by wading through everything to figure out what matters.

Content first drafts

I have several sites producing regular written content. Before AI, a first draft was either slow (me) or expensive (a writer). Now AI produces a working first draft in minutes, given a good brief. The output still needs editing, sometimes significant editing, but starting from something is faster than starting from nothing. The time savings across a content-heavy business are substantial.

Research compilation

Before a call, a decision, or a content brief, I used to spend 20 to 40 minutes pulling background together. Now AI does that pass first. It’s not always right and it doesn’t replace deep investigation, but it means I arrive at the decision point with context already assembled. I can then spend my time on the parts that require actual judgement rather than retrieval.

Data summaries

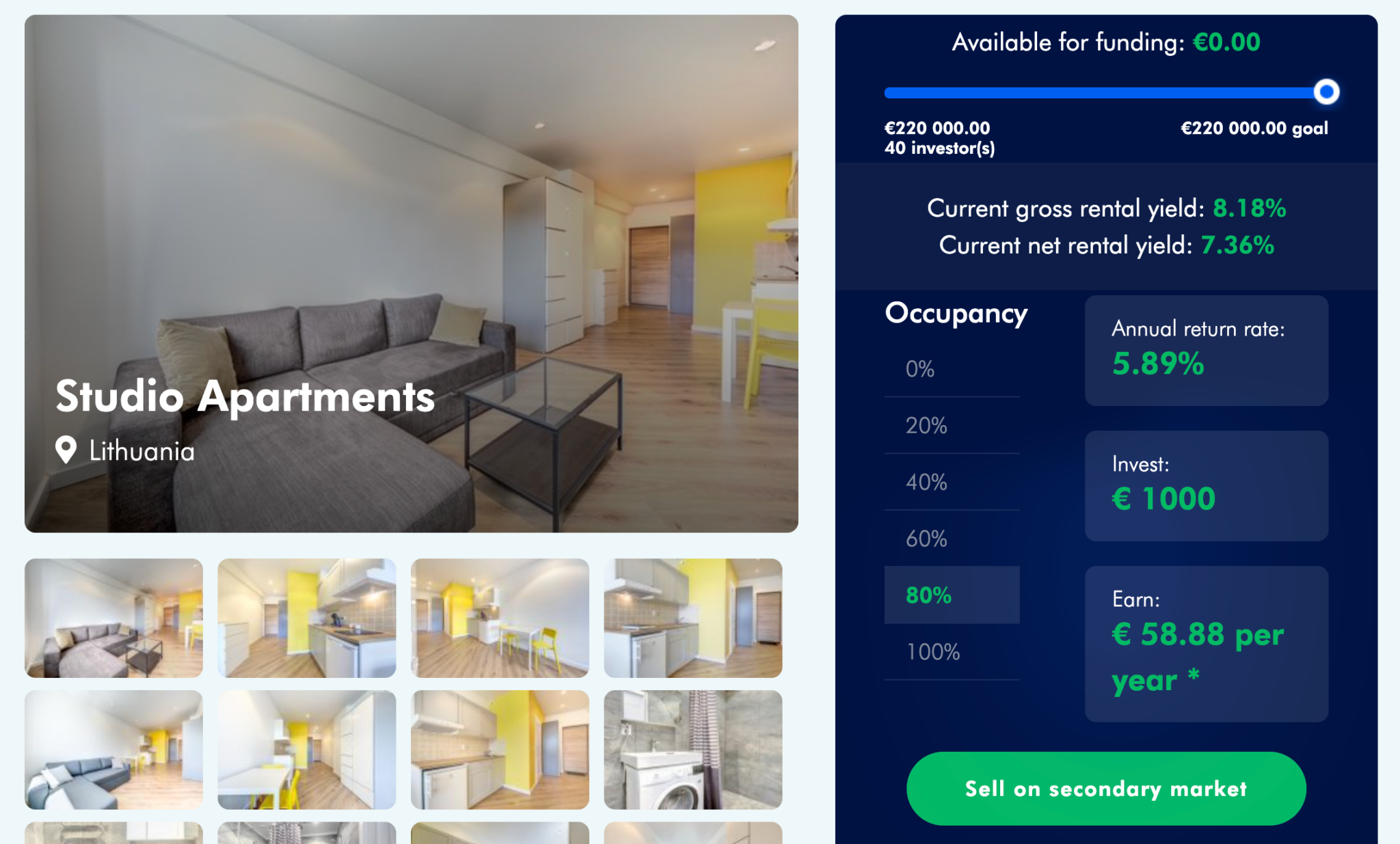

Pulling numbers from multiple tools, formatting them into something readable, and flagging anything that looks off. This was low-value, time-consuming work that happened every week across my businesses. AI does it better and faster than a person doing it manually, because it doesn’t get bored or make the kind of transcription errors that come from copy-pasting across spreadsheets. Getting that data in the first place is its own challenge, and there are now several ways to pull data from websites without writing code.

All four of these worked well early on. But they’re not where I should have started.

The Tasks I Should Have Automated First

With hindsight, the highest-value first moves were the ones I came to later. Three stand out.

Repetitive admin

Invoicing, follow-up sequences, status update emails, form processing. The stuff that takes 5 minutes each time but happens 30 times a week. I delayed automating this because it felt too mundane to bother with. That was a mistake. Across a year, the hours I spent on admin that AI could have handled are genuinely embarrassing to add up.

The rule I use now: if I’ve done a task more than ten times and could describe it in writing without ambiguity, it belongs in an automation, not on my calendar.
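To see why the small tasks add up, here is a rough back-of-the-envelope sketch using the figures above (5 minutes each, 30 times a week). The 52-week year and the function names are my own assumptions for illustration, not a precise accounting.

```python
WEEKS_PER_YEAR = 52  # assumption: the task recurs every week of the year


def yearly_hours(minutes_per_task: float, times_per_week: int) -> float:
    """Hours per year spent on one small recurring task."""
    return minutes_per_task * times_per_week * WEEKS_PER_YEAR / 60


def belongs_in_automation(times_done: int, describable_without_ambiguity: bool) -> bool:
    """The rule of thumb: done more than ten times and describable in writing."""
    return times_done > 10 and describable_without_ambiguity


if __name__ == "__main__":
    # 5 minutes x 30 times a week x 52 weeks, converted to hours
    print(yearly_hours(5, 30))  # 130.0
    print(belongs_in_automation(times_done=12, describable_without_ambiguity=True))  # True
```

On those numbers, a "5-minute" task costs roughly 130 hours a year, which is more than three full working weeks.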

Scheduling and coordination

Booking meetings, managing back-and-forth over availability, sending reminders, following up on no-shows. These tasks are cognitively cheap but chronologically expensive. Every interruption to schedule something breaks concentration on actual work. AI agents handle this reliably, and the setup time is shorter than it sounds.

Customer FAQ responses

For WP RSS Aggregator, a significant share of the support inbox was the same questions arriving every week. New users asking how to set up a feed. People confused about the same two settings. Billing questions with standard answers. AI handles all of this now as a first pass, and the volume it absorbs means the human support time is concentrated on the edge cases that genuinely need thinking.

These three tasks had the best ratio of setup effort to time saved. They’re also low-risk: the cost of an occasional wrong answer is low, and there’s a human in the review loop for anything that needs it.

Tasks That Seemed Perfect for AI but Weren’t

Not everything goes to plan. A few things I thought would be easy automations turned out to be harder than they looked.

Complex decision-making

I tried using AI to help evaluate business decisions with multiple variables. Which market to enter. Whether to pursue a partnership. How to price a new product tier. The AI was often confident and always articulate, yet it frequently missed things that would have been obvious to anyone with context about the specific situation.

The problem isn’t that AI is stupid. It’s that complex decisions involve things that aren’t in any document: the history with a particular client, a feeling about where the market is going, a judgement call about risk that’s personal to me and my businesses. AI doesn’t have access to any of that. It answers based on what it can see, which for strategic decisions is never the whole picture.

Relationship-heavy communications

Any message where the subtext matters more than the text. Handling a frustrated long-term client. Negotiating with a supplier. Checking in with someone who’d gone quiet. I tried having AI draft these and almost always edited them so heavily that I might as well have written them from scratch.

The AI gets the words right but misses the tone. It doesn’t know the history. It can’t calibrate to the specific dynamic. There are tasks where humans are still genuinely better, and this category is most of them.

A Simple Framework for Picking Your First AI Task

After enough trial and error, I use four questions to evaluate whether a task is worth automating.

- Does it happen repeatedly? A task that occurs once is a poor automation candidate. One that occurs fifty times a week is a strong one. Volume is what makes the investment worthwhile.

- Can you define what good output looks like? If you can write down the criteria for a correct result, AI can probably meet them. If “good” is subjective in ways that matter, or changes with context in unpredictable ways, a human is still the better choice.

- What’s the cost of an error? AI makes mistakes. On a routine FAQ response, a small error is annoying but recoverable. On a contract negotiation or a client relationship, it might not be. Start with tasks where the error cost is low while you’re building trust in the system.

- Does it require judgement, or just execution? Execution is automatable. Judgement is not, at least not yet. Scheduling a meeting is execution. Deciding which meetings are worth taking is judgement. The line is blurrier than it seems at first, but it’s worth drawing carefully.

Run any candidate task through those four questions. If it scores well on all of them, start there. If it fails on the third or fourth (the cost of an error is high, or it needs judgement rather than execution), leave it for now and find something that passes.

The other thing I’d add: pick a task you currently do yourself, not one that sits with someone on your team. That way you can evaluate the output directly rather than guessing at quality.
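If it helps to see the four questions as a checklist, here is a minimal sketch. The `Task` fields, the weekly-volume threshold, and the "weak candidate" label for tasks that only fail the first or second question are all my own assumptions for illustration. Treat it as a thinking aid, not a scoring system.

```python
from dataclasses import dataclass

MIN_WEEKLY_VOLUME = 5  # hypothetical threshold for "happens repeatedly"


@dataclass
class Task:
    name: str
    times_per_week: int        # question 1: does it happen repeatedly?
    output_is_definable: bool  # question 2: can you define good output?
    error_cost_is_low: bool    # question 3: what's the cost of an error?
    is_execution_only: bool    # question 4: judgement, or just execution?


def verdict(task: Task) -> str:
    # Failing question 3 or 4 means leave it for now.
    if not task.error_cost_is_low or not task.is_execution_only:
        return "leave for now"
    # Passing all four means start here.
    if task.times_per_week >= MIN_WEEKLY_VOLUME and task.output_is_definable:
        return "start here"
    # Assumption: low volume or fuzzy criteria makes it a weak first pick.
    return "weak candidate"


if __name__ == "__main__":
    candidates = [
        Task("Customer FAQ replies", 50, True, True, True),
        Task("Supplier negotiation", 2, False, False, False),
        Task("One-off report", 1, True, True, True),
    ]
    for task in candidates:
        print(f"{task.name}: {verdict(task)}")
```

The order of the checks mirrors the advice above: error cost and judgement are treated as hard stops, while volume and definability decide whether a safe task is worth the setup effort.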

The Compounding Effect

Here’s what nobody tells you at the start: the value of automating one task isn’t just the time that one task saves. It’s what happens to your attention once that task is off your plate.

When I stopped spending 45 minutes a day on email triage, I didn’t just get 45 minutes back. I got a different quality of attention for the first hour of the day, which changed what I was able to work on and how well I worked on it. That compounds in ways that don’t show up on a time-tracking spreadsheet.

The second effect is that once you’ve built one automation well, the next one is faster. You understand the tools. You know how to write prompts that produce consistent output. You know where the edge cases hide and how to design review steps. The infrastructure you build for the first task carries over to the second and the third.

I now have automated workflows running across email, content production, research, data reporting, scheduling, and customer support. Most of them took no more than a few days to implement. The first one took considerably longer, because I had to build the thinking framework at the same time as the system.

That’s why starting well matters more than starting fast. The first automation teaches you how to automate. The stack you build from there is what determines whether AI becomes a genuine structural advantage in your business or stays a set of useful tools you occasionally poke at.

If you want to skip the trial-and-error phase and see what a working AI implementation looks like in practice, AgentVania is where I work with businesses on exactly this.

But if you’re going it alone, the short version is: start boring, start repetitive, start low-stakes. The interesting problems come later, once the boring ones are handled and you can actually see the shape of what’s left.
