I’ve spent the last few months helping people build AI agents. Different niches, different stacks, different goals. One pattern keeps showing up, and it’s the same pattern I catch in my own agent projects: the agent has plenty of memory but very little knowledge.

The distinction matters more than I first thought it did, so let me draw the line clearly.

Memory is what the agent knows about itself. The conversations it’s had, the decisions it’s made, the documents you’ve fed it, the notes its operator has left for it. This is what most people mean when they say “agent memory” — vector stores, Obsidian vaults, scratchpads, long context windows. It’s persistent and private to the agent.

Knowledge is something different. Knowledge is what’s true about the world right now. What happened yesterday in the niche the agent is supposed to be expert in. What case study just dropped. What algorithm update just shipped. What tool just launched. None of it is in the agent’s memory, because it couldn’t be — it didn’t exist the last time the memory was updated.

You can’t solve the knowledge gap with a bigger vector store. It’s a different problem. And the cleanest fix I’ve found is so unfashionable that I felt slightly embarrassed the first time I recommended it: a curated RSS hub running on WordPress.

Stick with me for a minute.

Why the Obvious Approaches Don’t Work

When people realize their agent has a knowledge gap, they usually try one of three things.

The first is live web search at runtime. Every time the agent needs current information, it queries Perplexity, Tavily, Brave Search, or something similar. This works, sort of, but it’s slow (three to ten seconds per query) and the API costs add up fast — most of the search APIs sit in the $3-10 per thousand queries range. More importantly, the result quality is unpredictable. You’ll get a great answer one minute and SEO spam the next, and the agent has no way to tell the difference.

The second is custom scrapers. Pick the five sites you actually care about, scrape them on a schedule, store the results. This works until the source redesigns, or starts blocking you, or rate-limits your IP, or moves behind a paywall. I’ve burned more weekends fixing broken scrapers than I’d care to admit, and none of that time was spent on the actual agent.

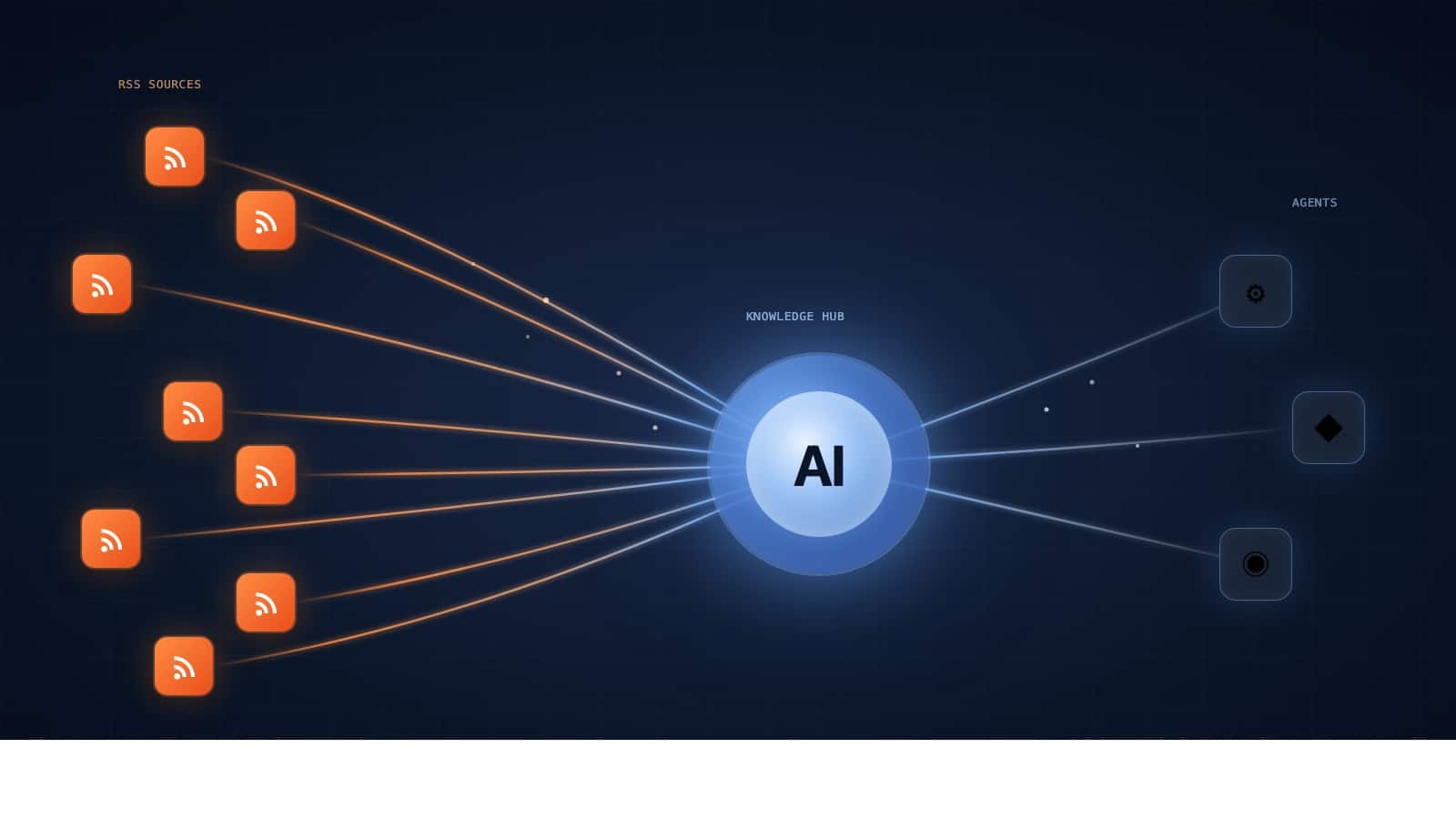

The third is feeding the agent twenty or thirty raw RSS feeds directly and asking it to figure things out. This is the closest approach to what I’m about to recommend, but it pushes too much work onto the agent. Feed parsing, deduplication, filtering, format normalization — all of it has to happen on every run, and all of it costs tokens. None of it is the work the agent was actually built to do.

There’s a fourth option, and it’s the one I keep coming back to. Build a curation layer between the raw sources and your agent. Run that layer on WordPress. Expose its output as a single clean RSS feed that the agent subscribes to.

Why WordPress, of All Things

I know how this sounds. WordPress is famously not the tool that AI builders reach for. But three things happen to be true at once, and together they make it the right pick.

First, WordPress has the best RSS aggregation plugin I’ve used: WP RSS Aggregator. It handles parsing, deduplication, scheduling, full-text import, keyword filtering, and category routing. I don’t want to write any of that code myself, and nothing in the Python ecosystem does all of it as well in a single package.

Second, WordPress automatically generates RSS feeds for everything you publish. Every category, every tag, every custom taxonomy is a subscribable feed by default. Your curated knowledge hub becomes an API your agent can talk to without you writing a single line of code on the output side.

Third, the entire stack runs on a cheap VPS. Total setup time: under an hour. I’ve built knowledge hubs in Python before, gluing together feedparser, a dedup layer, a scheduler, a filtering layer, and a serving layer. By the time I had something running, the WordPress version would have been in production for six months.

The cost math alone is hard to argue with. A WordPress knowledge hub runs a few dollars a month flat with unlimited reads. An agent hitting a search API 5,000 times a month is paying $15-50 at the rates above for worse, slower, less curated data. You break even on day one.
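For anyone who wants to plug in their own numbers, here is the arithmetic as a small sketch. The per-thousand prices are the range quoted earlier in this post; the $5 hub cost is my assumption for "a few dollars a month" and should be swapped for your actual VPS price.

```python
# Rough monthly cost comparison using the figures quoted in this post.
QUERIES_PER_MONTH = 5_000
SEARCH_PRICE_PER_1K = (3.0, 10.0)  # USD per thousand queries, low and high end
HUB_FLAT_COST = 5.0                # USD per month; assumed VPS price


def search_api_cost(queries, price_per_1k):
    """Monthly spend for a metered search API."""
    return queries / 1000 * price_per_1k


low, high = (search_api_cost(QUERIES_PER_MONTH, p) for p in SEARCH_PRICE_PER_1K)
print(f"Search API: ${low:.0f}-${high:.0f} per month")
print(f"Curated hub: ${HUB_FLAT_COST:.0f} per month, flat")
```

The hub cost stays flat as query volume grows, while the metered cost scales linearly with it.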

Use the right tool. For this, the right tool is WordPress.

Walkthrough: Building an SEO Knowledge Hub

Let me make this concrete with the example I keep using: an SEO agent.

Half the agent demos I see right now are SEO agents. The pitch is always something like “this agent watches your site and tells you what to fix.” The problem is that SEO is one of the fastest-moving niches there is. Algorithm updates ship constantly. Best practices shift quarterly. AI search is rewriting the rulebook in real time. An SEO agent that doesn’t know about last week’s core update is giving advice from a year ago.

Here’s how I’d build the knowledge layer for it.

Step 1: Pick the Sources

Twenty to twenty-five high-quality SEO sources. Get their actual RSS feeds, not just their URLs. My shortlist would look something like this:

- Search Engine Land, Search Engine Roundtable

- Google Search Central blog, Google Search Status Dashboard

- Aleyda Solis, Lily Ray, Glen Allsopp (Detailed)

- Moz, Ahrefs, and SEMrush blogs

- r/SEO via reddit.com/r/SEO/.rss

- A few YouTube channels via their YouTube channel RSS feeds

- One or two podcast feeds that publish detailed show notes

Skip anything that’s mostly affiliate roundups, “we just launched” press releases, or recycled news. Treat source selection the way you’d treat training data selection, because functionally that’s exactly what it is.
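Finding the actual feed URL is usually a matter of reading the page's `<head>`: most sites advertise their feed with a `<link rel="alternate">` tag. The sketch below shows that lookup using only the standard library. The sample HTML and `example.com` are made up for illustration, and some sites don't advertise a feed this way, so treat it as a starting point.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

FEED_TYPES = {"application/rss+xml", "application/atom+xml"}


class FeedLinkFinder(HTMLParser):
    """Collects <link rel="alternate"> tags that advertise an RSS or Atom feed."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.feeds = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        a = dict(attrs)
        rel = (a.get("rel") or "").lower().split()
        mime = (a.get("type") or "").lower()
        if "alternate" in rel and mime in FEED_TYPES and a.get("href"):
            # Resolve relative hrefs against the page URL.
            self.feeds.append(urljoin(self.base_url, a["href"]))


def discover_feeds(html, base_url):
    """Return absolute feed URLs advertised in an HTML page."""
    finder = FeedLinkFinder(base_url)
    finder.feed(html)
    return finder.feeds


sample = """<html><head>
<link rel="alternate" type="application/rss+xml" href="/feed/">
<link rel="stylesheet" href="/style.css">
</head></html>"""
print(discover_feeds(sample, "https://example.com/blog/"))
```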

Step 2: Set Up WordPress and Ingest

Spin up a WordPress install on whatever cheap host you prefer. Install WP RSS Aggregator. Add each source as a feed. Set polling intervals to every 30-60 minutes; polling more often than that is overkill for any niche short of breaking news.

Enable full-text import for sources where the licensing allows it. Most editorial blogs permit it. Paywalled sources don’t, and you should respect that.

Step 3: Curate

This is the step that turns a dumb autoblog into something an agent can rely on. WP RSS Aggregator gives you several tools for the job:

- Keyword filters to drop noise. Exclude titles containing “sponsored”, “promoted”, “deal”, “we just launched”, and anything else that reliably marks PR fluff in your niche.

- Category routing to organize by topic. Algorithm updates go to /algorithm-updates, technical SEO to /technical, AI search to /ai-search, case studies to /case-studies.

- Optional manual approval queue for the top-tier sources you want completely pristine. Five minutes of human review a day is enough for most niches.

Garbage in, garbage out applies even more strongly to agents than to humans, because the agent has no taste and treats every input equally. The entire point of the curation layer is signal-to-noise.
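In the plugin you configure all of this through the admin UI, but the underlying logic is simple enough to sketch. The snippet below illustrates the filtering and routing idea in Python; it is not how WP RSS Aggregator implements it. The blocklist comes from the exclusion examples above, while the routing keywords are hypothetical and would need tuning for a real hub.

```python
import re

# Phrases from the exclusion examples above; extend for your niche.
BLOCKLIST = ["sponsored", "promoted", "deal", "we just launched"]

# Hypothetical routing rules: category slug -> keywords that send an item there.
ROUTES = {
    "algorithm-updates": ["core update", "algorithm", "ranking update"],
    "technical": ["crawl", "schema", "core web vitals"],
    "ai-search": ["ai overviews", "ai search", "llm"],
    "case-studies": ["case study"],
}


def has_phrase(text, phrase):
    """Whole-word, case-insensitive match, so "deal" does not hit "ideal"."""
    pattern = r"\b" + re.escape(phrase) + r"\b"
    return re.search(pattern, text, re.IGNORECASE) is not None


def is_noise(title):
    """True if the title contains any blocklisted phrase."""
    return any(has_phrase(title, p) for p in BLOCKLIST)


def route(title):
    """Return the first matching category slug, or None if nothing matches."""
    for slug, keywords in ROUTES.items():
        if any(has_phrase(title, k) for k in keywords):
            return slug
    return None


for title in [
    "Google confirms March core update",
    "Sponsored: the best SEO deal of the year",
    "The ideal crawl budget for large sites",
]:
    print(title, "->", "dropped" if is_noise(title) else route(title))
```

Whole-word matching is a deliberate choice here: a plain substring check on "deal" would silently drop every title containing "ideal", which is the kind of filter bug that is hard to notice after the fact.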

Step 4: Expose the Feeds

WordPress generates the feeds for you automatically:

- yoursite.com/feed/: everything

- yoursite.com/category/algorithm-updates/feed/: high-signal subset

- yoursite.com/category/case-studies/feed/: tactical wins

- yoursite.com/tag/google/feed/: Google-specific

You’ll want to subscribe to the curated subsets, not the firehose. The whole point of doing the curation work is to consume the cleaner output, not the raw stream.
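If the agent subscribes to several curated subsets, it helps to build the URLs in one place. This is a minimal sketch that assumes pretty permalinks with WordPress's default `category` and `tag` bases; if you've changed either in your permalink settings, adjust accordingly. `yoursite.com` is the placeholder domain used throughout this post.

```python
SITE = "https://yoursite.com"  # placeholder domain


def category_feed(site, slug):
    """Feed URL for one category, assuming the default 'category' base."""
    return f"{site.rstrip('/')}/category/{slug}/feed/"


def tag_feed(site, slug):
    """Feed URL for one tag, assuming the default 'tag' base."""
    return f"{site.rstrip('/')}/tag/{slug}/feed/"


# The curated subsets the agent subscribes to, instead of the firehose.
SUBSCRIPTIONS = [
    category_feed(SITE, "algorithm-updates"),
    category_feed(SITE, "case-studies"),
    tag_feed(SITE, "google"),
]
for url in SUBSCRIPTIONS:
    print(url)
```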

Step 5: Wire It Into the Agent

This is the easy part, and it’s the same regardless of your stack:

- Subscribe to one of your curated category feeds

- Run it once a day, or once an hour for fast-moving niches

- Summarize each new item to two or three sentences with the LLM of your choice

- Write the summary into the agent’s knowledge store

In n8n, that’s three nodes: an RSS Trigger, an LLM summarization step, and a write-to-storage step (Postgres, Notion, Obsidian markdown, vector DB, whatever you’re already using). Twenty minutes from blank canvas to running pipeline.

In Python, it’s feedparser plus about fifteen lines.
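Here is roughly what that looks like. To keep the sketch dependency-free I've swapped feedparser for the standard library's `xml.etree.ElementTree`, which handles plain RSS 2.0 fine but is far less forgiving of malformed feeds. The `truncate_summary` function is a stand-in for your LLM call, the `store` callback stands in for whatever storage you use, and the sample feed is made up.

```python
import urllib.request
import xml.etree.ElementTree as ET


def fetch(url):
    """Download a feed as bytes; only needed when running against a live hub."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return resp.read()


def parse_rss(xml_data):
    """Return a list of item dicts from RSS 2.0 XML (str or bytes)."""
    root = ET.fromstring(xml_data)
    return [
        {
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "published": (item.findtext("pubDate") or "").strip(),
            "text": (item.findtext("description") or "").strip(),
        }
        for item in root.iter("item")
    ]


def truncate_summary(item, limit=200):
    """Placeholder summarizer; replace with a call to the LLM of your choice."""
    return f'{item["title"]}: {item["text"]}'[:limit]


def run_once(xml_data, seen_links, summarize, store):
    """One pass: parse, skip already-seen links, summarize, store."""
    fresh = [i for i in parse_rss(xml_data) if i["link"] and i["link"] not in seen_links]
    for item in fresh:
        store({**item, "summary": summarize(item)})
        seen_links.add(item["link"])
    return len(fresh)


# Made-up sample feed so the sketch runs without network access.
SAMPLE = """<rss version="2.0"><channel><title>Algorithm updates</title>
<item><title>Core update rolling out</title><link>https://yoursite.com/a/</link>
<pubDate>Mon, 02 Mar 2026 09:00:00 +0000</pubDate>
<description>Rollout may take two weeks.</description></item>
<item><title>Crawl stats report changes</title><link>https://yoursite.com/b/</link>
<pubDate>Tue, 03 Mar 2026 09:00:00 +0000</pubDate>
<description>New fields in the report.</description></item>
</channel></rss>"""

seen, stored = set(), []
print(run_once(SAMPLE, seen, truncate_summary, stored.append))  # first pass stores both items
print(run_once(SAMPLE, seen, truncate_summary, stored.append))  # second pass finds nothing new
```

Against a live hub you would pass `fetch(feed_url)` instead of `SAMPLE` and persist `seen_links` between runs, for example in the same database that holds the summaries.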

If you prefer Cloudflare Workers, LangChain’s RSS document loader, Make, or just plain cron and curl, all of those work too. RSS is universal — whatever framework you’re using already knows how to consume it.

Now when the agent starts work each morning, it loads its memory (its own history) and its knowledge (yesterday’s curated SEO updates). It’s working with 24-hour-old context instead of 18-month-old training data. That’s a meaningful upgrade for almost no ongoing effort.

The MCP Angle

If you’re building agents in 2026, the most interesting wiring isn’t a daily cron job. It’s wrapping the hub as an MCP server.

The pattern looks like this: build a small MCP server that exposes two tools to the agent. Something like get_recent_knowledge(topic, since) and search_knowledge(query). Behind those tools, hit the WordPress REST API or the RSS feeds directly.

Now your agent decides when to pull, what topic to ask about, and how far back to look. The knowledge layer becomes native to the agent stack instead of bolted on with a cron job. You get on-demand knowledge access without paying search API prices, because you’re hitting your own VPS.
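A minimal sketch of the two tool functions, backed by the WordPress REST API's posts endpoint, might look like the following. The `categories`, `after`, `search`, `per_page`, and `_fields` query parameters are standard REST API parameters; the `TOPIC_IDS` mapping is hypothetical, since category IDs are specific to your install, and `yoursite.com` is a placeholder.

```python
import json
import urllib.request
from urllib.parse import urlencode

HUB = "https://yoursite.com"            # placeholder domain
TOPIC_IDS = {"algorithm-updates": 12}   # hypothetical category IDs; look up your own


def posts_url(topic=None, since=None, query=None, per_page=20):
    """Build a /wp-json/wp/v2/posts URL for the given filters."""
    params = {"per_page": per_page, "_fields": "date,link,title,excerpt"}
    if topic is not None:
        params["categories"] = TOPIC_IDS[topic]
    if since is not None:
        params["after"] = since  # ISO 8601, e.g. "2026-01-15T00:00:00"
    if query is not None:
        params["search"] = query
    return f"{HUB}/wp-json/wp/v2/posts?{urlencode(params)}"


def slim(posts):
    """Reduce REST API post objects to the fields the agent needs."""
    return [
        {
            "date": p["date"],
            "link": p["link"],
            "title": p["title"]["rendered"],
            "excerpt": p["excerpt"]["rendered"],
        }
        for p in posts
    ]


def _get(url):
    """Fetch and decode a JSON response from the hub."""
    with urllib.request.urlopen(url, timeout=30) as resp:
        return json.load(resp)


def get_recent_knowledge(topic, since):
    """Tool 1: posts in one topic published after the given timestamp."""
    return slim(_get(posts_url(topic=topic, since=since)))


def search_knowledge(query):
    """Tool 2: full-text search across the whole hub."""
    return slim(_get(posts_url(query=query)))


print(posts_url(topic="algorithm-updates", since="2026-01-15T00:00:00"))
```

Registering these as MCP tools is left out so the sketch runs on the standard library alone. With the official MCP Python SDK, that wiring is typically a few lines: create a server object and decorate each function as a tool.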

This is the version of the pattern that finally makes it click for agent builders who’ve never touched a CMS in their life. The CMS is an implementation detail. The MCP server is the interface.

Common Mistakes

A few things I’ve seen people get wrong:

- Subscribing to the firehose feed instead of category feeds. You’ll drown the agent in noise and double its inference bill. Always subscribe to curated subsets.

- Skipping the curation step. “I’ll just feed all 30 sources directly.” No. Half of any 30-source feed is PR fluff. Curation is the entire value proposition.

- Storing full articles instead of summaries. The agent doesn’t need 3,000 words on every algorithm update. It needs the gist, indexed by date and topic.

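- Forgetting to deduplicate across sources. The same news shows up in eight places. WP RSS Aggregator deduplicates by URL by default, so make sure it’s on. Keep in mind that URL matching only catches the same article arriving through more than one feed; eight different write-ups of one story have eight different URLs, so collapse those with title filters or at the summary step.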

- Running the agent on every new item instead of batching daily. Most niches don’t move that fast. Daily is fine. Hourly is paranoid.
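Two of these mistakes, storing full articles and skipping deduplication, can be designed out at the storage layer. The sketch below uses SQLite as one possible knowledge store: summaries only, indexed by topic and date, with the URL as primary key so a repeated item is ignored. The table and column names are my own, and the sample row is made up.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a summary store indexed by topic and date."""
    db = sqlite3.connect(path)
    db.execute(
        """CREATE TABLE IF NOT EXISTS knowledge (
               url       TEXT PRIMARY KEY,  -- doubles as the dedup key
               topic     TEXT NOT NULL,
               published TEXT NOT NULL,     -- ISO 8601 date, sorts as text
               title     TEXT NOT NULL,
               summary   TEXT NOT NULL
           )"""
    )
    db.execute(
        "CREATE INDEX IF NOT EXISTS idx_topic_date ON knowledge (topic, published)"
    )
    return db


def add_summary(db, url, topic, published, title, summary):
    """Insert one summary; returns False if the URL was already stored."""
    cur = db.execute(
        "INSERT OR IGNORE INTO knowledge VALUES (?, ?, ?, ?, ?)",
        (url, topic, published, title, summary),
    )
    db.commit()
    return cur.rowcount == 1


def recent(db, topic, since):
    """Summaries for one topic published on or after `since`, newest first."""
    rows = db.execute(
        "SELECT published, title, summary FROM knowledge "
        "WHERE topic = ? AND published >= ? ORDER BY published DESC",
        (topic, since),
    )
    return rows.fetchall()


db = open_store()
row = ("https://yoursite.com/a/", "algorithm-updates", "2026-03-02",
       "Core update rolling out", "Rollout may take two weeks.")
print(add_summary(db, *row))  # stored
print(add_summary(db, *row))  # same URL again, ignored
print(recent(db, "algorithm-updates", "2026-03-01"))
```

This is the same URL-level deduplication described above, just enforced a second time on the agent's side of the pipeline.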

The Bigger Pattern

Once you separate memory from knowledge, you start seeing the gap in every agent you build. It’s not just SEO. Crypto agents, market intel agents, product research agents, customer support agents, competitive tracking agents — all of them benefit from a knowledge layer that updates daily and a memory layer that updates per session.

The agents that win in 2026 won’t be the ones with the biggest memory. They’ll be the ones with the best knowledge supply chain. And right now, that supply chain is something you have to build yourself, because nobody’s shipping it as a product yet.

A curated RSS hub on WordPress is the simplest version of that supply chain I’ve found. If you build agents and you don’t have a knowledge layer separate from your memory layer, build one this weekend. Pick an agent you’ve already shipped, pick the niche it’s supposed to be expert in, spin up WordPress, point WP RSS Aggregator at twenty sources, and wire the curated feed into the agent. An afternoon of work for a permanent upgrade in how current the agent’s worldview stays.

We do a lot of this kind of plumbing at AgentVania for clients who want their agents to actually know what’s happening in their industry. Whether you build it yourself or hand it off, the principle holds: separate memory from knowledge, build a clean source of truth for the knowledge side, and let the agent spend its tokens on the work it’s actually good at.
